If you know me or follow this blog at all, you probably know a few things about me: 1) I really like working with the latest technology and 2) I have a long history with Education and Learning. It just so happens that a project I am working on now, which uses some “cutting edge” technology, takes me back about 18 years to a project I worked on in the summer of 1992. I am talking about “hypervideo”, though in 2010 we are doing it with streaming high-definition video instead of laserdisc recorders.

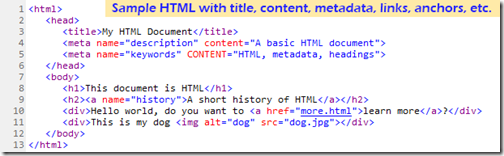

What did “hypertext” do for “text” with HTML?

We are so used to hypertext and the World Wide Web that we really don’t think about the technology and features behind it any more, but let’s take a second to “review the obvious”. The HyperText Markup Language (HTML) is the coding behind the World Wide Web. It takes raw text and puts a structure around and within it. What started as a simple text file now gains things like:

- a “title”,

- “headings”,

- “hyperlinks”,

- navigation,

- sections/anchors,

- a unique identifier (uniform resource identifier (URI)),

- and data-about-the-data or “metadata”.

Users of these pages rarely see any of this information directly, but they appreciate it and use it all the time. They can enter an address like www.nbcolympics.com and be taken directly to the online Olympic coverage from NBC (and how little most people appreciate how easy this is for them to do now). Users can “bookmark” or “favorite” particular pages and get back to them whenever they want to. Better yet, they can type a few words or a phrase into Google or Bing and often find exactly what they are looking for. Search engines use the titles, headings, and metadata in the page in their search routines to find these pages. And let’s not forget those wonderful underlined blue hyperlinks and linked buttons that allow us to jump from page to page, and often find great resources that we never knew we were interested in.

The problem with non-text items on web pages is that it is hard for computers to figure out what they are – if you put a picture of your dog on a web page, it is still very hard for a browser or search engine to “look” at the picture and file it under “dog.” With words you can often tell what they mean from the context of the words that precede or follow them, so this helps. Today, images can carry an “alt” attribute where you can describe the picture in words. This was originally thought to be for browsers that did not support images or for screen readers for visually impaired users of the web, but it now serves very well to help identify images to search engines.

What if we could do similar things for video?

But what about video? Sites like YouTube, Facebook, and Vimeo enable the upload of tens of thousands of hours of video. Each video has a title and description, which help search find the video. But what about what is inside the video? A single video may have many distinct sections or chapters where information is presented logically. In each section there could be textual information describing what is going on in the video (think closed captioning or even the Descriptive Video Service). What if you could not only search to find the video, but also find a particular time-code within the video (e.g. where the bit of text you searched for occurred)? What if you could embed hyperlinks to other videos, other time-codes, other pages or notes, etc. within the video itself, so that they would appear or become available at a particular time while the video was playing?

Well, we can.

TUVA

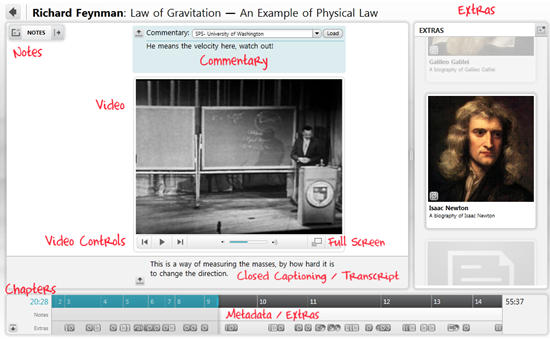

My favorite current example of hypervideo ideas is Project TUVA. For one, the main content is Dr. Richard Feynman’s wonderful physics lecture series at Cornell in the ’60s (and who doesn’t like some good physics every so often?). But, for me, it is also nice to know that it is built with Silverlight, a toolset that I know well, so I could use many of these features myself down the road.

At the core of the player you will see something that looks very common for videos on the internet. There are the standard “VCR” controls, volume, and full-screen buttons – nothing really special so far.

But it doesn’t take long to see just how much else can be added to the video player when you start thinking about hypervideo concepts of linking, navigation, chapters, notes, and more.

Chapters.

The first thing I noticed about the player was the expanded navigation and information timeline on the very bottom (I would really encourage you to open this site if possible, because experiencing this live will help you understand it much more than a few static images and my attempt to describe the interactions with words). Each of the seven videos in Feynman’s lecture series is broken up into logical “chapters” with chapter titles like “Newton” and “Electricity”. This provides much the same functionality as HTML headings and anchor tags. You can quickly see the structure of the video and jump to any chapter from this navigation bar.
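To make the chapter idea concrete, here is a minimal Python sketch of chapter metadata and the two lookups a navigation bar needs. This is only an illustration, not how Project TUVA is actually built: the `Chapter` class, the function names, and the start times are all hypothetical (only the titles “Newton” and “Electricity” come from the player).

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class Chapter:
    title: str
    start: float  # seconds from the beginning of the video


# Hypothetical chapter list: the start times are invented for illustration.
CHAPTERS = [
    Chapter("Introduction", 0.0),
    Chapter("Newton", 310.0),
    Chapter("Electricity", 1425.0),
]


def chapter_at(chapters, position):
    """Return the chapter playing at `position` seconds.

    Assumes `chapters` is sorted by start time.
    """
    starts = [c.start for c in chapters]
    index = bisect_right(starts, position) - 1
    return chapters[max(index, 0)]


def seek_target(chapters, title):
    """Return the time to jump to when a chapter title is clicked."""
    for chapter in chapters:
        if chapter.title == title:
            return chapter.start
    raise KeyError(title)
```

The point is that a chapter is nothing more than a title plus a time-code, which is exactly what an HTML heading plus an anchor gives you for text.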

Notes / Expert Commentary

Since this player was designed for an educational setting, it lets viewers add their own notes in a left-side panel. Anyone watching the video can add a note at a particular time and review it later. In addition to this, you can load other people’s notes files and see what they were thinking during the video. This feature allows for the addition of “expert commentary” within the video frame – kind of like a DVD that lets you turn on an audio track with the director and/or other people involved with the movie. This would also allow a teacher to include their own comments and instructions for students watching the video – and yes, these are also time-stamped, so they become clickable as well and add another layer of navigation.

Closed Captioning / Transcript

We’ve all seen closed-captioning on television shows where what is being said in a particular show is displayed on the screen for those who are hearing impaired or for situations where the ambient noise in a room is such that the television can’t be heard.

The “Tuva” interface takes closed-captioning one step further and turns it into a full transcript of the talk being given. You can literally read through all the different closed-captioning entries in a scrollable textbox. Not only can you read through the captioning, but each caption is itself a hyperlink that will take you to the time in the video where that caption was on screen.
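A clickable transcript boils down to a list of time-stamped captions, each turned into a link. The sketch below is my own illustration in Python, not the Tuva implementation: the `Cue` class, the function names, and the `#t=<seconds>` link format are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cue:
    start: float  # seconds when the caption appears
    end: float    # seconds when the caption disappears
    text: str


def format_timecode(seconds):
    """Format a position in seconds as HH:MM:SS."""
    total = int(seconds)
    hours, remainder = divmod(total, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def transcript_links(cues, video_url):
    """Turn each caption into a (timecode, text, link) row.

    The "#t=<seconds>" suffix is an assumed convention for this sketch;
    a real player would define its own way to address a time-code.
    """
    return [
        (format_timecode(cue.start), cue.text, f"{video_url}#t={int(cue.start)}")
        for cue in cues
    ]
```

Each row has what the scrollable transcript needs: a label to show, the text to read, and a target to jump to.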

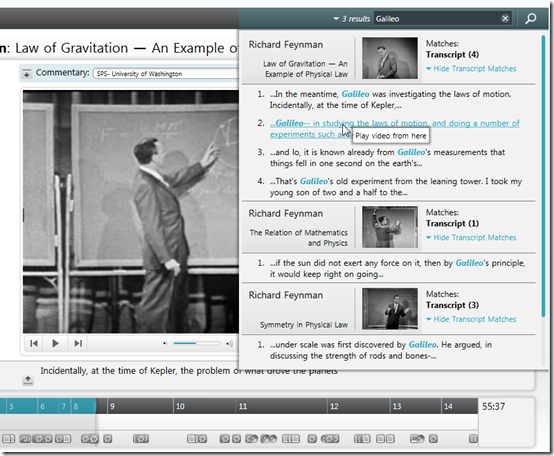

But wait. If we have all this text now, linked to the video, can’t we search this too? Yes.

Search

The interactive search box in the “Tuva” interface will allow the user to search the transcripts of all of the chapters of all of the videos for a keyword or phrase. Then all of the “hits” can be displayed, and yes, they are clickable hyperlinks.
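Once the captions are time-stamped text, search is a simple scan that returns time-codes instead of page addresses. Here is a minimal Python sketch under that assumption; the function name and the shape of the `transcripts` dictionary are hypothetical, and a real player would likely use a proper search index rather than a linear scan.

```python
def search_transcripts(transcripts, query):
    """Find every caption containing `query`, ignoring case.

    `transcripts` maps a video title to a list of (start_seconds, text)
    pairs. Returns a list of (video_title, start_seconds, text) hits,
    each of which can be rendered as a clickable link to that time-code.
    """
    needle = query.casefold()
    hits = []
    for video, cues in transcripts.items():
        for start, text in cues:
            if needle in text.casefold():
                hits.append((video, start, text))
    return hits
```

Every hit carries its own time-code, which is what makes the results clickable.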

Links / Extras

The other notable feature of this interface is the “Extras” which are shown at the very bottom of the screen. Embedded into the video are bits of metadata which enable the inclusion of “notes” and “links” within the video itself. Each extra can have an associated icon or image which becomes visible in the “Extras” pane on the right at the appropriate time in the video. A “note” type extra can pop up a panel with more information about the topic being discussed. The viewer can click on the icon to read this note, which pauses the video until they are done. Similarly, clicking on a “link” extra will take the user to another part of the site, or even off the site, to a page that explains a topic in more detail. For example, if Feynman is discussing Albert Einstein, and the viewer knows a lot about Einstein, then they can ignore the picture of Einstein in the Extras panel. If they are less familiar with Einstein, they can click on the icon and be taken to a new page that discusses the works and life of Einstein in detail. When they are ready, the viewer can close the page and return to the video, which then proceeds from where they left off.
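The time-triggered behavior of extras can be sketched as metadata records with a visibility window. This Python example is an assumption-laden illustration rather than the actual Tuva data format: the `Extra` class, its fields, and the sample values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Extra:
    kind: str     # "note" or "link"
    label: str    # text or icon caption shown in the Extras pane
    start: float  # seconds when the extra becomes visible
    end: float    # seconds when it disappears again
    target: str   # note body for a "note", address for a "link"


def visible_extras(extras, position):
    """Return the extras that should be shown at `position` seconds."""
    return [e for e in extras if e.start <= position < e.end]


def pauses_video(extra):
    """In this sketch, opening a note pauses playback; a link does not."""
    return extra.kind == "note"
```

A player would call something like `visible_extras` each time the playback position changes and redraw the pane with whatever comes back.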

NBC Olympics

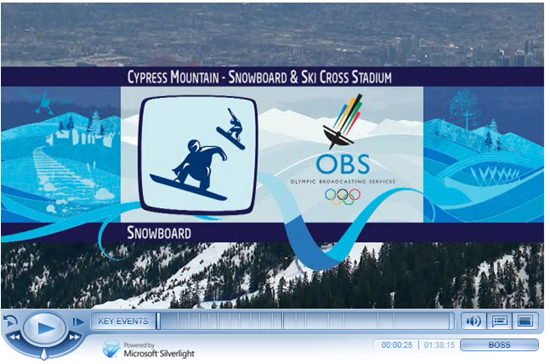

But how might this technology look in a less “academic” and non-research or prototype situation – how about the 2010 Winter Olympics?

NBC created an online player that would stream live and pre-recorded events to viewers everywhere. They created a nice “blue-ice” themed player with all the functions you would expect from a modern player: Play/Pause, Fast Forward, Rewind, Jump Back, Volume, Full-Screen, and even a humorous “Boss” button that filled your screen with a Windows 7 desktop with an open Excel spreadsheet – cute.

But if you look a little closer you will see some components that are not yet very typical of video players online.

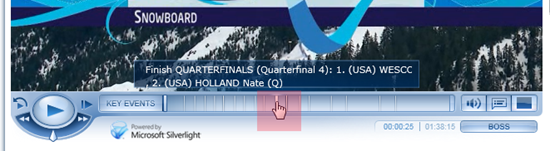

Clicking on the “Key Events” button for this Snowboarding video pops up a scrollable list of all of the heats within this pre-recorded event. If I scroll through the list looking for the round with Nick Baumgartner from the USA, I can find him in Heat 8 – clicking on this item takes me directly to that time in the video.

Similarly, the time bar at the bottom of the player has small lines at particular times that you might not even see if you weren’t looking for them. In this example I was trying to find the quarter-final with Wescott and Holland from the USA – clicking on that bookmark took me directly to that point.

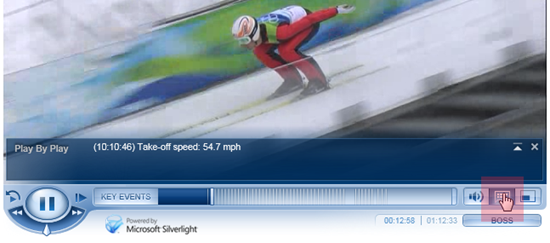

One more interesting use of “metadata” here is the “Play By Play” pane that you can also call up on the player. This lets you see interesting details about each event as it occurs. For example, here we can see that the skier has reached 54.7 mph on the ramp before taking off – crazy.

How is it Done?

So, great, this is a cool technology and I’ve got a bunch of ideas on how it could be used. What tools do I need to start building my own hypervideo projects? Stay tuned for a near future post on this question and some tips to get you started.

Great article Bruce and nice reference to "VCR" 🙂

Where do standards like MPEG-7 come into play? Are you utilizing MPEG-7 in the projects you’re working on? Do you know if NBC and Tuva are using MPEG-7?

Dave
